Job Scheduling

This information applies to IITKGP internal users. Other users (NSMapp, NSMext, etc.) are requested to contact CDAC for information on storage, charging policy, and queuing priority.

Scheduler

PARAM Shakti uses Slurm 22.05.09 (open-source) as the workload manager for the HPC facility. The following partitions (queues) have been defined to meet different computational requirements.

| Partition | Min-Max Cores / Nodes per Job | Max Walltime | Priority | Comments |
|---|---|---|---|---|
| Shared | 1-36 cores | 3 days | 200 | Compute nodes only. Node sharing is allowed between different jobs. |
| Medium | 1-10 nodes | 3 days | 200 | Compute and high-memory nodes. Nodes are allocated exclusively; node sharing is not allowed. |
| Large | 1-10 nodes | 7 days | 10 | Compute and high-memory nodes. Intended for long-running jobs; node sharing is not allowed. |
| GPU | | | | GPU nodes only. Configuration remains unchanged. |
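As an illustration of a job that fits the Shared row of the table, a minimal OpenMP job script might look like the sketch below. The partition name `shared`, the job name, and the executable `./my_openmp_app` are assumptions that do not come from this page; the partition names actually configured can be checked with `sinfo`.

```shell
#!/bin/bash
#SBATCH --job-name=omp_demo        # hypothetical job name
#SBATCH --partition=shared         # assumed partition name; confirm with `sinfo`
#SBATCH --nodes=1                  # OpenMP threads must share one node
#SBATCH --ntasks=1                 # a single process...
#SBATCH --cpus-per-task=8          # ...with 8 cores (1 to 36 allowed per job)
#SBATCH --time=0-12:00:00          # 12 hours; the partition limit is 3 days

# Match the OpenMP thread count to the allocated cores.
# The fallback of 8 only applies when the script runs outside Slurm.
export OMP_NUM_THREADS="${SLURM_CPUS_PER_TASK:-8}"
echo "Using ${OMP_NUM_THREADS} OpenMP threads"

# Launch the (hypothetical) OpenMP executable; uncomment on the cluster.
# ./my_openmp_app
```

Tying `OMP_NUM_THREADS` to `SLURM_CPUS_PER_TASK` keeps the thread count and the core request in one place, which matters on a partition where other jobs may share the node.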

Partition Details

- Shared Partition: Designed for serial and OpenMP jobs. Users may request a minimum of 1 core and a maximum of 36 cores. The maximum walltime is 3 days.

- Medium Partition: Suitable for single-node and multi-node jobs. Entire nodes are allocated exclusively. Users may request between 1 and 10 nodes. The parameter `--ntasks-per-node=40` must not be changed. Maximum walltime is 3 days.

- Large Partition: Similar to the medium partition but intended for longer jobs with a maximum walltime of 7 days. Due to its lower priority, this partition should be used only when longer walltime is required.

- GPU Partition: Dedicated to GPU-based workloads. Configuration remains unchanged.
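The rules for the medium partition can be sketched as a multi-node job script. This is only an example under stated assumptions: the partition name `medium`, the job name, and the executable `./my_app` are placeholders that do not come from this page.

```shell
#!/bin/bash
#SBATCH --job-name=medium_demo     # hypothetical job name
#SBATCH --partition=medium         # assumed partition name; confirm with `sinfo`
#SBATCH --nodes=2                  # the medium partition allows 1 to 10 nodes
#SBATCH --ntasks-per-node=40       # must not be changed on this partition
#SBATCH --time=3-00:00:00          # 3 days, the maximum walltime for medium

# Outside Slurm the variable is unset, so fall back to the requested node count.
NODES="${SLURM_JOB_NUM_NODES:-2}"
TOTAL_TASKS=$(( NODES * 40 ))
echo "Running ${TOTAL_TASKS} tasks on ${NODES} node(s)"

# Launch the (hypothetical) MPI executable; uncomment on the cluster.
# srun ./my_app
```

For a run that needs more than 3 days, the same script would target the large partition with a `--time` value of up to 7 days, at the cost of lower queue priority.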

Types of Nodes

- Compute Nodes (cn): The system comprises 384 compute nodes, labelled `cn001` to `cn384`. These nodes are distributed across the shared and medium/large partitions based on historical usage and job queue demand.

- High-Memory Nodes (hm): A total of 36 high-memory nodes, labelled `hm001` to `hm036`, are available only in the medium and large partitions. These nodes are intended for memory-intensive workloads. To request more than 4.3 GB of memory per core, users may specify either of the following in the job submission script:

  - `#SBATCH --exclude=cn[085-384]`
  - `#SBATCH --mem-per-cpu=AAG` (replace `AA` with a value between 4 and 18)

- GPU Nodes (gpu): The system includes 22 GPU nodes, labelled `gpu001` to `gpu022`. These nodes are accessible exclusively through the GPU partition. To request a GPU, include `#SBATCH --gres=gpu:1` in the job submission script.
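A memory-intensive job using the `--mem-per-cpu` option described above might be written as in the sketch below. The partition name `medium`, the job name, the 8 GB figure, and the executable `./my_app` are illustrative assumptions.

```shell
#!/bin/bash
#SBATCH --job-name=highmem_demo    # hypothetical job name
#SBATCH --partition=medium         # assumed partition name; confirm with `sinfo`
#SBATCH --nodes=1
#SBATCH --ntasks-per-node=40       # fixed value for the medium partition
#SBATCH --mem-per-cpu=8G           # per-core memory; the documented range is 4 to 18
#SBATCH --time=1-00:00:00          # 1 day; the medium partition limit is 3 days

# Record where the job landed so the node type (cn or hm) can be checked in the log.
# The fallback text only appears when the script runs outside Slurm.
NODELIST="${SLURM_JOB_NODELIST:-unknown (not running under Slurm)}"
echo "Allocated nodes: ${NODELIST}"

# Launch the (hypothetical) executable; uncomment on the cluster.
# srun ./my_app
```

A GPU job would instead target the GPU partition and add `#SBATCH --gres=gpu:1`; the remaining settings for that partition are not covered on this page.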

Storage Policy

⚠️ Important

Users must submit jobs from the /scratch/$USER directory.
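One way to avoid submitting from the wrong location is a small guard in a wrapper script. The helper name `in_scratch` and the script name `job.sh` below are hypothetical; the sketch only compares path strings and does not resolve symbolic links.

```shell
# Hypothetical helper: succeeds only if directory $1 is /scratch/<user> or below it.
# $2 is the user name and defaults to $USER.
in_scratch() {
    dir=$1
    user=${2:-$USER}
    case "$dir" in
        "/scratch/$user" | "/scratch/$user"/*) return 0 ;;
        *) return 1 ;;
    esac
}

# Example guard before submitting (job.sh is a placeholder script name):
# in_scratch "$PWD" || { echo "Submit from /scratch/$USER" >&2; exit 1; }
# sbatch job.sh
```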

| File System | Quota | Retention Period |
|---|---|---|
| /home | Soft Limit: 40 GB, Hard Limit: 50 GB (*except for UG students) | Unlimited |
| /scratch | Soft Limit: 2 TB | 60 days. Files older than the retention period will be deleted. |

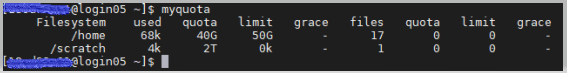

Users can check their /home and /scratch quota using the `myquota` command.
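Because files on /scratch are deleted after the 60-day retention period, it can help to list files that are getting close to that age. The sketch below uses the standard `find` command; the helper name `list_old_files` and the 50-day threshold are assumptions, and so is the use of modification time, since this page does not say which timestamp the retention policy is based on.

```shell
# Hypothetical helper: list regular files under directory $1 whose
# modification time is more than $2 days old (default 50).
list_old_files() {
    dir=$1
    days=${2:-50}
    find "$dir" -type f -mtime +"$days" -print
}

# Example: show scratch files older than 50 days (uncomment on the cluster).
# list_old_files "/scratch/$USER" 50
```

Files reported here are candidates for copying to local storage before the retention period expires.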

Usage Instructions

- `/home/<username>/`: Used for installing applications.
- `/scratch/<username>/`: Used for project/research data.

Users are advised to maintain local backups of their data. Storage and charging policies are subject to change.

Data Safety

Users are advised to keep a local backup of their data. Once the project/research work is completed, transfer the data from PARAM Shakti (PS) to your local system using the commands shown in the File Transfers section.

Data stored in the scratch directory on PS is NOT backed up or archived by the system administration, and the PS administration is not responsible for restoring damaged or lost files. Backing up and archiving data is the responsibility of the user and their Project Guide/Adviser.