DGX AI Cluster

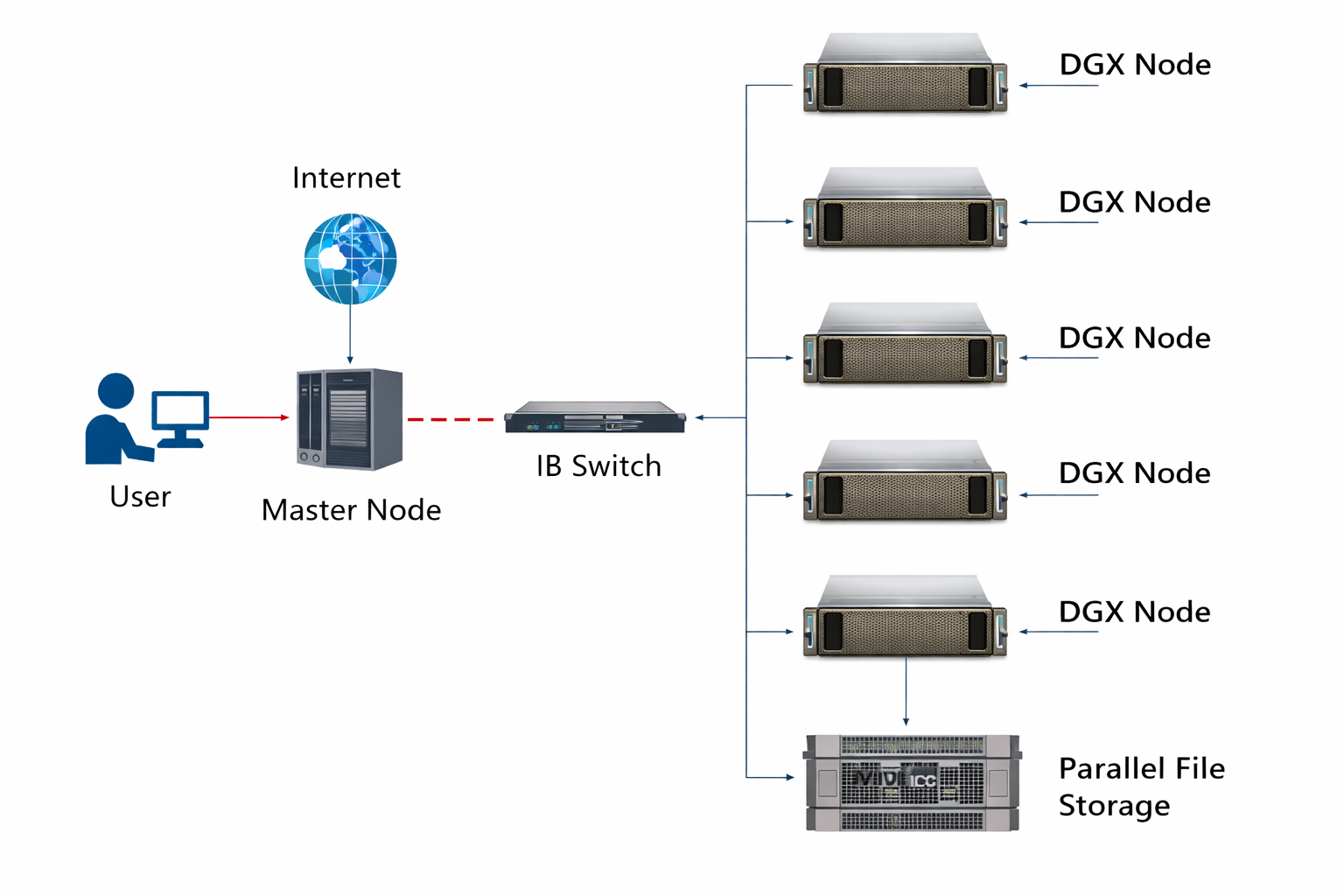

The DGX GPU Cluster at CCDS, IIT Kharagpur, is a state-of-the-art high-performance AI supercomputing infrastructure designed to support large-scale artificial intelligence training, deep learning research, advanced simulations, and data-intensive analytics. Built to meet the growing computational demands of modern research, the cluster delivers enterprise-grade performance, scalability, and reliability for cutting-edge scientific and engineering applications.

At its core, the system is built on the NVIDIA DGX H100 architecture, with eight NVIDIA H100 Tensor Core GPUs per node. The GPUs are interconnected through four NVSwitches, giving full GPU-to-GPU connectivity within each node and high-bandwidth, low-latency communication for AI model training and inference.

To further enhance data throughput and scalability, the cluster has been recently upgraded with a dedicated 1.1 PiB Parallel File System (PFS) storage infrastructure. This storage system is integrated using NVIDIA Quantum InfiniBand switching technology with 400 Gbps bandwidth, providing high-speed, low-latency communication between compute and storage layers. This architecture significantly improves performance for large datasets, distributed AI workloads, and multi-node training environments.

The DGX GPU Cluster represents a major step forward in strengthening IIT Kharagpur’s capabilities in AI research, high-performance computing, and data-driven innovation under the Centre of Computational and Data Sciences (CCDS).

Network Architecture

System Architecture

| Component | Specification |
|---|---|
| Master Node | Intel Xeon SKL G-6148 (40 Cores @ 2.4 GHz), 384 GB RAM, 5 TB Storage |
| Compute Nodes | 5 × NVIDIA DGX H100 Systems |
| GPU Configuration | 8 × NVIDIA H100 GPUs per node (NVSwitch Interconnect) |

Recent Infrastructure Enhancements (2026)

- 1.1 PiB Parallel File System (PFS) storage: high-throughput distributed storage enabling large-scale AI model training and high-speed data access across compute nodes.

- NVIDIA Quantum InfiniBand networking (400 Gbps): ultra-low-latency, high-bandwidth networking backbone connecting compute nodes and storage infrastructure.

Cluster Access

Login Command:

ssh <username>@gpucluster.iitkgp.ac.in

Partition Mapping:

- dgx1 → dgx1

- dgx2 → dgx2

- dgx3 → dgx3

- dgx4 → dgx4

- dgx5 → dgx5

Storage & Data Management

- 50 GB quota in /home/<username>

- 1 TB quota in /dgxx/<username>

- RAID directories mounted as /dgxx

cd /dgxx/<username>/
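Usage can be compared against these quotas before a large job writes its output. The following is a minimal sketch, assuming standard `du` is available on the login node; `quota_report` is a hypothetical helper name, not a cluster-provided tool, and the quota figure is passed in by hand rather than read from the system.

```shell
# Hypothetical helper: report a directory's disk usage against a quota in GB.
# Usage: quota_report <directory> <quota_gb>
quota_report() {
    dir="$1"
    quota_gb="$2"
    # du -sk prints "<kilobytes><TAB><path>"; keep only the number.
    used_kb=$(du -sk "$dir" 2>/dev/null | cut -f1)
    # Integer division, so usage is rounded down to whole GB.
    used_gb=$(( ${used_kb:-0} / 1024 / 1024 ))
    echo "$dir: ${used_gb} GB used of ${quota_gb} GB"
}
```

For example, `quota_report "$HOME" 50` checks the home directory against its 50 GB quota. Scanning a large directory with `du` can take a while.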

QOS Restrictions

| QoS Name | Max Jobs/User | Max CPUs | Min GPUs | Max GPUs | Max Wall Time |
|---|---|---|---|---|---|
| gpu2 | 2 | 56 | 1 | 2 | 72 hours (3 days) |
| gpu4 | 2 | 56 | 3 | 4 | 48 hours (2 days) |
| gpu6 | 1 | 112 | 5 | 6 | 24 hours (1 day) |
| gpu8 | 1 | 112 | 7 | 8 | 12 hours |
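A request can be checked against these limits before submission. The following is a minimal sketch with the GPU and wall-time limits copied from the table; `check_qos_request` is a hypothetical helper, not part of Slurm or the cluster's tooling, and it does not check the CPU or jobs-per-user limits.

```shell
# Hypothetical helper: validate a GPU count and wall time (in whole hours)
# against the QoS limits listed in the table.
# Usage: check_qos_request <qos> <gpus> <hours>
check_qos_request() {
    qos="$1"
    gpus="$2"
    hours="$3"
    case "$qos" in
        gpu2) min_gpus=1; max_gpus=2; max_hours=72 ;;
        gpu4) min_gpus=3; max_gpus=4; max_hours=48 ;;
        gpu6) min_gpus=5; max_gpus=6; max_hours=24 ;;
        gpu8) min_gpus=7; max_gpus=8; max_hours=12 ;;
        *) echo "unknown QoS: $qos"; return 2 ;;
    esac
    if [ "$gpus" -lt "$min_gpus" ] || [ "$gpus" -gt "$max_gpus" ]; then
        echo "$qos allows $min_gpus-$max_gpus GPUs, requested $gpus"
        return 1
    fi
    if [ "$hours" -gt "$max_hours" ]; then
        echo "$qos allows at most $max_hours hours, requested $hours"
        return 1
    fi
    echo "ok"
}
```

For example, `check_qos_request gpu4 4 72` reports that gpu4 allows at most 48 hours and returns a non-zero status.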

User Guide

Important Job Submission Guidelines

- Jobs must be submitted to a single node (#SBATCH --nodes=1).

- The number of tasks per node (--ntasks-per-node) and GPUs (--gres=gpu) must align with the assigned limits.

- Users must specify the correct QoS and partition in their job script.
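A job script that follows these guidelines might look like the sketch below. The partition (`dgx1`) and QoS (`gpu2`) are taken from the tables on this page; the job name, log file name, and `train.py` are hypothetical placeholders, and the environment variables are those Slurm typically sets for a GPU job.

```shell
#!/bin/bash
# Hypothetical job name.
#SBATCH --job-name=train_example
# One of the partitions dgx1 to dgx5.
#SBATCH --partition=dgx1
# gpu2 permits 1-2 GPUs and up to 72 hours of wall time.
#SBATCH --qos=gpu2
# Jobs must run on a single node.
#SBATCH --nodes=1
#SBATCH --ntasks-per-node=1
# GPU count within the gpu2 limit.
#SBATCH --gres=gpu:2
# Wall time below the 72-hour gpu2 cap.
#SBATCH --time=24:00:00
# %j expands to the job ID.
#SBATCH --output=train_%j.log

# Outside Slurm these variables are unset, so fall back to placeholders.
job_id="${SLURM_JOB_ID:-none}"
gpus_visible="${CUDA_VISIBLE_DEVICES:-unset}"
echo "Job $job_id on $(hostname)"
echo "Visible GPUs: $gpus_visible"

# Replace with the actual workload; train.py is a hypothetical script name.
# python train.py
```

Saved as, for example, `script.sh`, it is submitted with `sbatch script.sh`.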

Sample Submission Commands

sbatch /home/train_script.sh

cd /home/<username>

sbatch script.sh

Common Errors

Issue: GPU count requested exceeds QoS limits.

#SBATCH --qos=gpu2

#SBATCH --gres=gpu:4 # gpu2 allows max 2 GPUs

Fix: Match GPU count with selected QoS.

Issue: Requested time exceeds allowed QoS wall time.

#SBATCH --qos=gpu4

#SBATCH --time=72:00:00 # gpu4 max is 48 hours

Fix: Reduce wall time or select appropriate QoS.

Issue: Job submitted from compute node instead of master node.

sbatch /raid/<username>/train_script.sh # Incorrect

Fix:

cd ~

sbatch train_script.sh # Submit from home directory

Monitoring Tools

- sinfo – View partitions

- squeue – Monitor jobs

- df -h – Check storage mounts

- module avail – View available modules
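The commands above can be combined into a quick status check. This is a minimal sketch; `run_if_available` is a hypothetical wrapper that skips any tool not found on the current machine, so the same snippet runs both on the cluster and elsewhere.

```shell
# Hypothetical wrapper: run a command only if it exists on this machine.
run_if_available() {
    if command -v "$1" >/dev/null 2>&1; then
        "$@"
    else
        echo "skipped: $1 not found"
    fi
}

# Partitions and node states.
run_if_available sinfo
# Only the current user's jobs (-u filters by user name).
run_if_available squeue -u "${USER:-$(id -un)}"
# Mounted filesystems and free space.
run_if_available df -h
# Software modules available to load.
run_if_available module avail
```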

Getting Started with Parallel Computing using MATLAB on the Param Shakti HPC and DGX AI Cluster

📄 Download Combined Document (PS & DGX AI)

For additional documents and recordings from the workshop held in May 2022, please visit the link below:

🔗 View Workshop Materials